CSE576 Project 3: Face Detection

Testing recognition with cropped class images

Step1

1. Average Face

![]()

2. Eigen Faces

![]()

![]()

![]()

![]()

![]()

![]()

![]()

![]()

![]()

![]()
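The average face and eigen faces shown above can be obtained with a principal component analysis of the training images. The following is a minimal sketch, not the project's actual code: the function name `compute_eigenfaces`, the 25x25 image size, and the random stand-in data are all assumptions made for illustration.

```python
import numpy as np

def compute_eigenfaces(faces, n_eigenfaces):
    """Return (mean_face, eigenfaces) for a stack of flattened face images.

    faces: array of shape (n_images, n_pixels), one grayscale face per row.
    """
    faces = np.asarray(faces, dtype=np.float64)
    mean_face = faces.mean(axis=0)        # the "average face"
    centered = faces - mean_face          # remove the mean before PCA
    # Rows of vt are orthonormal principal directions,
    # ordered by decreasing singular value (i.e. decreasing variance).
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return mean_face, vt[:n_eigenfaces]

# Stand-in data: 21 random "images" of 25x25 pixels (hypothetical size).
rng = np.random.default_rng(0)
demo_faces = rng.random((21, 25 * 25))
mean_face, eigenfaces = compute_eigenfaces(demo_faces, 10)
```

Each row of `eigenfaces` can be reshaped to the image size and displayed, which is how pictures like the ten above are usually produced.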

Step2

Userbase computed in this step.
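A userbase of this kind typically stores, for each user, the coefficients of that user's face projected onto the eigen faces. The sketch below assumes that representation; `project_face`, `build_userbase`, and the tiny 4-pixel "images" are hypothetical and only illustrate the idea.

```python
import numpy as np

def project_face(face, mean_face, eigenfaces):
    """Coefficients of a flattened face in the eigen-face basis."""
    return eigenfaces @ (np.asarray(face, dtype=np.float64) - mean_face)

def build_userbase(named_faces, mean_face, eigenfaces):
    """Map each user name to that user's eigen-face coefficients."""
    return {name: project_face(face, mean_face, eigenfaces)
            for name, face in named_faces.items()}

# Toy example: zero mean face and two orthonormal "eigen faces".
toy_mean = np.zeros(4)
toy_eigenfaces = np.eye(4)[:2]
toy_userbase = build_userbase(
    {"01": [1.0, 2.0, 3.0, 4.0], "02": [4.0, 3.0, 2.0, 1.0]},
    toy_mean,
    toy_eigenfaces,
)
```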

Step3

1. Results with 10 eigen faces

Correct: 03, 04, 06, 10, 12, 16, 18, 19, 20, 21

False: 01, 02, 05, 07, 08, 09, 11, 13, 14, 15, 17

Accuracy: 10/21 = 48%
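Results like these come from matching each test image against the userbase. One common way to do this, assumed here rather than taken from the project code, is to project the test face onto the eigen faces and pick the user whose stored coefficients have the smallest mean squared error (MSE). The name `recognize` and the toy data are hypothetical.

```python
import numpy as np

def recognize(face, mean_face, eigenfaces, userbase):
    """Return (best_user, scores), where scores maps user -> MSE in coefficient space."""
    coeffs = eigenfaces @ (np.asarray(face, dtype=np.float64) - mean_face)
    scores = {name: float(np.mean((coeffs - stored) ** 2))
              for name, stored in userbase.items()}
    best_user = min(scores, key=scores.get)   # smallest MSE wins
    return best_user, scores

# Toy example with two users and a 3-pixel "image".
toy_mean = np.zeros(3)
toy_eigenfaces = np.eye(3)[:2]
toy_userbase = {"03": np.array([1.0, 0.0]), "04": np.array([0.0, 5.0])}
best_user, scores = recognize([0.9, 0.2, 7.0], toy_mean, toy_eigenfaces, toy_userbase)
```

In the toy example the third pixel is ignored because neither eigen face covers it, so the match depends only on the two projected coefficients.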

2.

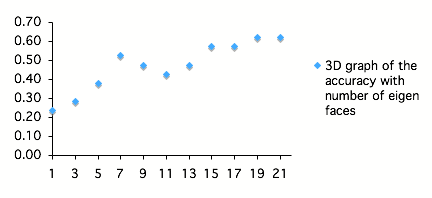

Results with 21 eigen faces (Accuracies)

1 eigen face: 5/21

3 eigen faces: 6/21

5 eigen faces: 8/21

7 eigen faces: 11/21

9 eigen faces: 10/21

11 eigen faces: 9/21

13 eigen faces: 10/21

15 eigen faces: 12/21

17 eigen faces: 12/21

19 eigen faces: 13/21

21 eigen faces: 13/21
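The counts above can be turned into percentages and checked for local maxima with a few lines of Python. The counts are copied from the list; the variable names are only illustrative.

```python
# Number of correctly recognized images (out of 21) per number of eigen faces.
correct_counts = {1: 5, 3: 6, 5: 8, 7: 11, 9: 10, 11: 9,
                  13: 10, 15: 12, 17: 12, 19: 13, 21: 13}
TOTAL = 21

accuracy = {k: c / TOTAL for k, c in correct_counts.items()}
for k in sorted(accuracy):
    print(f"{k:2d} eigen faces: {correct_counts[k]:2d}/{TOTAL} = {accuracy[k]:.0%}")

# A strict local maximum beats both of its neighbours in the sweep.
ks = sorted(correct_counts)
local_maxima = [
    ks[i] for i in range(1, len(ks) - 1)
    if correct_counts[ks[i]] > correct_counts[ks[i - 1]]
    and correct_counts[ks[i]] > correct_counts[ks[i + 1]]
]
```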

3. Plot

4. Discussion

In our test, accuracy reached a local maximum (11/21) when 7 eigen faces were used. It then dropped as more eigen faces were added and rose again once 15 or more eigen faces were used. It seems that 7 is a good number of eigen faces to represent the faces in our database. The higher accuracy obtained with 15 or more eigen faces seems to be due to overfitting to our dataset.

Cropping and Finding Faces

Step1

1. Cropping result for elf.tga

2. Cropping result for self.tga (self image)

![]()

Step2

1.

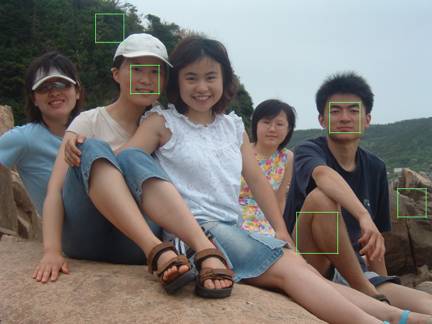

Detecting result for group.tga

2. Detection result for self_group.tga (self image)

Step3

Discussion:

Since the eigen faces were constructed from non-smiling photos and none of the photos include glasses, the detector is not able to deal with faces with glasses or faces with dramatic expressions.

Extra Credits

1. Verifying faces

We tried to verify each of the 21 smiling images against the actual user and against another user, to see whether they are verified correctly. That gives 21 tests whose results are supposed to be “yes” and 21 tests whose results are supposed to be “no”.

When the MSE threshold is set to 60000, correctness of “yes” is 10/21 while correctness of “no” is 21/21.

When the MSE threshold is set to 80000, correctness of “yes” is 12/21 while correctness of “no” is 21/21.

When the MSE threshold is set to 100000, correctness of “yes” is 14/21 while correctness of “no” is 19/21.

So in our test, 80000 is the best threshold, because falsely verifying a face image as another user is much worse than failing to verify a face image of the correct user.
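The threshold choice can be reproduced from the reported counts. The sketch below assumes that verification compares the MSE between a face's eigen-face coefficients and the claimed user's stored coefficients against the threshold; the function `verify_face` and this exact MSE definition are assumptions, while the counts are the ones reported above.

```python
def verify_face(face_coeffs, user_coeffs, max_mse):
    """Accept the claimed identity if the coefficient MSE is within the threshold."""
    squared = [(a - b) ** 2 for a, b in zip(face_coeffs, user_coeffs)]
    return sum(squared) / len(squared) <= max_mse

# threshold -> (correct "yes" out of 21, correct "no" out of 21), from the report
results = {60000: (10, 21), 80000: (12, 21), 100000: (14, 19)}
TOTAL = 21

for threshold, (yes_ok, no_ok) in sorted(results.items()):
    false_rejects = TOTAL - yes_ok   # genuine users turned away
    false_accepts = TOTAL - no_ok    # impostors let through
    print(f"{threshold}: {false_rejects} false rejects, {false_accepts} false accepts")

# Prefer zero false accepts, then the fewest false rejects among those thresholds.
safe = [t for t, (_, no_ok) in results.items() if no_ok == TOTAL]
best_threshold = max(safe, key=lambda t: results[t][0])
```

Treating false accepts as unacceptable and then minimizing false rejects selects 80000, matching the conclusion above.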

2. Symmetry test for finding faces

We added a step before verifying whether a sub-image is a face image. In this step, we measure the degree of symmetry of the sub-image and exclude sub-images that are not symmetric enough. This step is based on the fact that most face image patches are highly symmetric. The degree of symmetry is measured simply by summing the absolute differences between pixels at symmetrical positions of a sub-image. Our results show that this can reduce face detection time.
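The symmetry measure described above can be sketched as follows. This is an illustration under assumptions: it takes left-right mirror symmetry of a grayscale patch, and the names `asymmetry_score` and `is_symmetric_enough` and the cutoff `max_score` are hypothetical.

```python
import numpy as np

def asymmetry_score(patch):
    """Sum of absolute differences between horizontally mirrored pixel pairs.

    0 means perfectly left-right symmetric; larger means less symmetric.
    """
    patch = np.asarray(patch, dtype=np.float64)
    mirrored = patch[:, ::-1]
    half = patch.shape[1] // 2            # count each mirrored pair once
    return float(np.abs(patch[:, :half] - mirrored[:, :half]).sum())

def is_symmetric_enough(patch, max_score):
    """Cheap pre-filter to run before the more expensive face test."""
    return asymmetry_score(patch) <= max_score

symmetric_patch = [[1, 2, 2, 1],
                   [3, 4, 4, 3]]
lopsided_patch = [[0, 0, 9, 9],
                  [0, 0, 9, 9]]
```

Because the score needs only one pass over the pixels, rejecting asymmetric sub-images early avoids projecting them onto the eigen faces, which is one plausible reason for the shorter detection time.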