We segment objects during scene reconstruction rather than afterward, as is usual (and as in our past project "3-D Object Discovery Using Motion"). We also update the models of all objects and of the background after each video frame, so the robot can attend to other tasks while it builds these models. The models can also be updated later, using as much information as we can get from whatever camera views the robot happens to take.

Supplementary media for our ICRA14 paper "Toward Online 3-D Object Segmentation and Modeling"

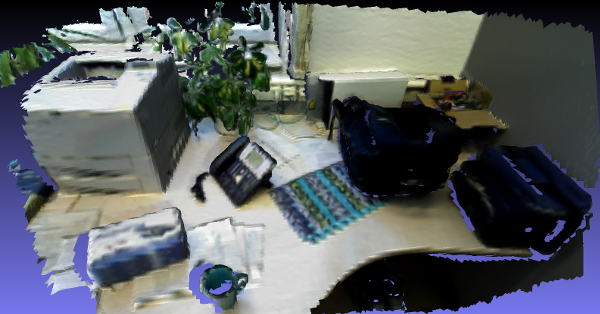

3-D maps (not used in the experiments in the paper; for visualization only) of all six scenes used in figure 3:

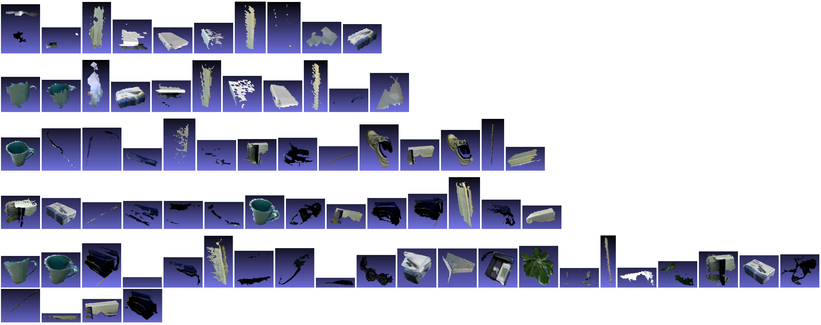

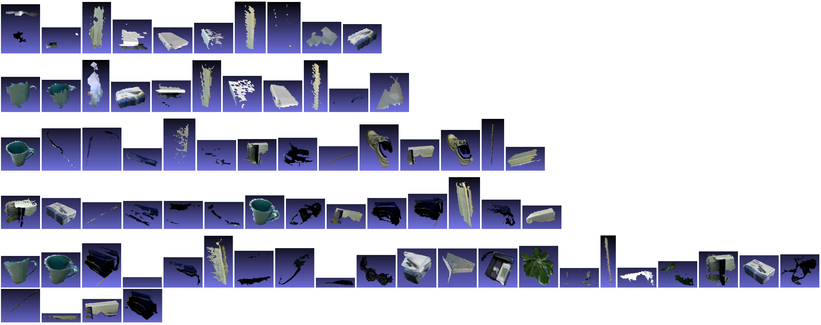

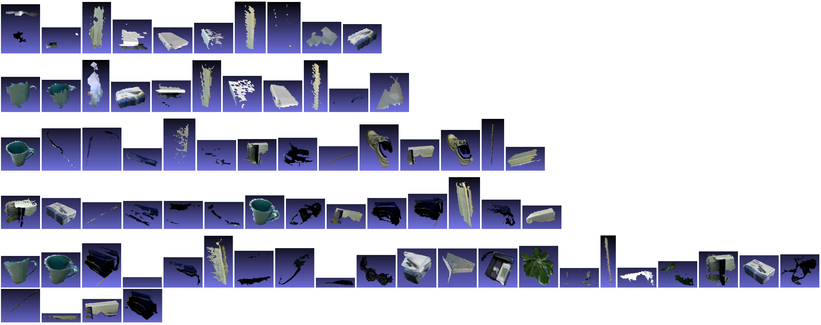

Comparison of object views segmented out by our off-line (blue background) and online (purple background) methods, supplementing the discussion in the manuscript:

Submission video:

Video of a segmentation demonstration:

Other Media

Videos demonstrating the usefulness of optimization with dense color and depth cues for alignment.
